For decades, the gap between robotic capability and human dexterity has been measured not just in hardware but in adaptability. Machines could repeat. Humans could improvise. That distinction is narrowing faster than most people expected.

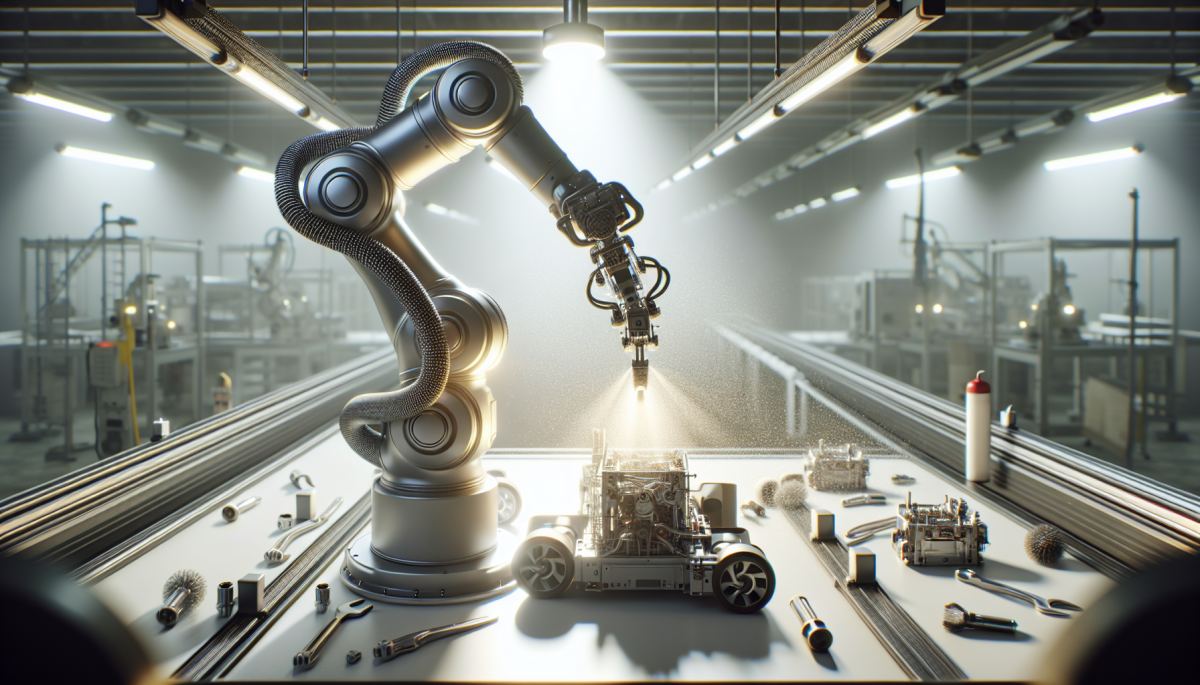

Physical AI startup Machina Labs, alongside a growing cluster of robotics researchers, has been pushing toward what the field calls generalist manipulation: the ability of a single robotic model to handle tasks it was never explicitly trained for. The latest benchmark in that pursuit comes from a model called GEN-1, which has demonstrated 99% task reliability across a range of physical challenges, including folding boxes and disassembling vacuum cleaners. More striking than the success rate is what drives it: the model can respond to mid-task disruptions and generate novel movement sequences on the fly, working through physical problems it never encountered in training.

That last part matters enormously. Traditional industrial robots are essentially very precise, very fast prisoners of their own programming. Move the conveyor belt two inches, change the packaging material, introduce a product variant, and the whole system can fail. The cost of that brittleness is not abstract. Manufacturers routinely spend millions reconfiguring robotic lines when product designs change, and the labor required to babysit automated systems is one of the dirty secrets of factory floor economics.

Reliability statistics in robotics are notoriously slippery. A system that succeeds 95% of the time sounds impressive until you run it across a production line completing thousands of cycles per day. At that scale, a 5% failure rate is operationally catastrophic. The jump from 95% to 99% is not incremental; it is the difference between a research curiosity and something a plant manager might actually stake a production schedule on.
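To make the scale argument concrete, here is a minimal sketch of the arithmetic. The figure of 5,000 cycles per day is an illustrative assumption standing in for "thousands of cycles per day"; it is not a number from any GEN-1 deployment.

```python
def expected_failures(cycles_per_day: int, success_rate: float) -> float:
    """Expected number of failed cycles per day at a given per-cycle success rate."""
    if not 0.0 <= success_rate <= 1.0:
        raise ValueError("success_rate must be between 0 and 1")
    return cycles_per_day * (1.0 - success_rate)


# Assumed workload for illustration only.
CYCLES_PER_DAY = 5_000

for rate in (0.95, 0.99):
    failures = expected_failures(CYCLES_PER_DAY, rate)
    print(f"{rate:.0%} reliable: about {failures:.0f} failed cycles per day")
```

Under that assumption, 95% reliability means roughly 250 failed cycles a day and 99% means roughly 50. The four-point improvement cuts failures fivefold.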

What makes GEN-1's architecture interesting from a systems perspective is its handling of disruption. Most robotic learning models are trained in simulation or controlled lab environments, which creates a well-documented problem called the sim-to-real gap. The real world is messier: lighting changes, objects shift, and surfaces vary in friction, so models trained in clean conditions often degrade sharply when deployed. A model that can recognize when something has gone wrong mid-task and recalculate its approach is essentially building a feedback loop into its own execution, something closer to how a human technician thinks than how a traditional robot operates.
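The difference between executing a fixed plan and re-planning from observation can be shown with a toy example. This is a generic closed-loop control sketch on a one-dimensional track, not GEN-1's method, which is not described here. The function names and the `disturbance` mapping are hypothetical.

```python
def plan(start: int, goal: int) -> list[int]:
    """Return the unit steps (+1 or -1) that move from start to goal."""
    step = 1 if goal >= start else -1
    return [step] * abs(goal - start)


def run_open_loop(start: int, goal: int, disturbance: dict[int, int]) -> bool:
    """Plan once, then execute every step without looking at the world again."""
    pos = start
    for i, step in enumerate(plan(start, goal)):
        goal += disturbance.get(i, 0)  # the target may shift mid-task
        pos += step                    # the robot does not notice
    return pos == goal


def run_closed_loop(start: int, goal: int, disturbance: dict[int, int],
                    max_steps: int = 100) -> bool:
    """Re-observe the target before each step and re-plan from the current state."""
    pos = start
    for i in range(max_steps):
        goal += disturbance.get(i, 0)  # the target may shift mid-task
        if pos == goal:
            return True
        pos += plan(pos, goal)[0]      # take only the first step of a fresh plan
    return pos == goal


# Hypothetical disruption: at step 2 the target shifts 3 units further away.
shift = {2: 3}
print("open loop reached target:  ", run_open_loop(0, 5, shift))
print("closed loop reached target:", run_closed_loop(0, 5, shift))
```

In this toy setup the open-loop run finishes its original five steps and stops short of the moved target, while the closed-loop run keeps correcting until it arrives. Real manipulation involves noisy perception and high-dimensional actions, but the structure of the loop is the same.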

This capacity for in-task recovery also changes the economics of deployment. If a robot can handle unexpected variation without halting and requiring human intervention, the ratio of machines to human supervisors can shift dramatically. That is where the second-order effects start to accumulate in ways that go well beyond the factory floor.
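A rough back-of-the-envelope model shows how that ratio might move. Every number below (cycles per hour, minutes per intervention, the halt rates) is an assumption chosen for illustration. The model also ignores queueing, walking time, and breaks, so treat the result as an upper bound on coverage rather than a staffing plan.

```python
def robots_per_supervisor(cycles_per_robot_per_hour: float,
                          intervention_rate: float,
                          minutes_per_intervention: float) -> float:
    """Upper bound on robots one person can cover.

    intervention_rate is the fraction of cycles that halt and need a human.
    """
    halts_per_hour = cycles_per_robot_per_hour * intervention_rate
    busy_minutes_per_robot = halts_per_hour * minutes_per_intervention
    if busy_minutes_per_robot == 0:
        return float("inf")  # no halts means no supervision limit in this model
    return 60.0 / busy_minutes_per_robot


# Assumed: 200 cycles per robot per hour, 2 minutes of hands-on time per halt.
for rate in (0.05, 0.01):
    n = robots_per_supervisor(200, rate, 2.0)
    print(f"halt rate {rate:.0%}: one supervisor covers about {n:.0f} robots")
```

With these assumed inputs, a 5% halt rate lets one person cover about 3 robots, and a 1% halt rate about 15.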

The implications of reliable generalist robots ripple outward in ways that are easy to underestimate. Labor markets in logistics, light manufacturing, and appliance repair are among the first in their path. The vacuum cleaner demonstration is telling precisely because appliance servicing has long resisted automation: it requires handling unfamiliar objects, diagnosing mechanical states, and applying variable force in tight spaces. These are exactly the conditions that have historically required human hands.

There is also a feedback dynamic worth watching in the training data ecosystem. Generalist models improve as they encounter more varied physical tasks, which means early commercial deployments are not just revenue events; they are data collection events. Companies that deploy these systems at scale will accumulate proprietary libraries of real-world manipulation data that smaller competitors or academic labs simply cannot replicate. The gap between frontier physical AI and everyone else could widen faster than the gap did in large language models, because physical data is harder to scrape from the internet.

Regulatory frameworks are nowhere near ready for this. Workplace safety standards, liability regimes for autonomous systems, and labor adjustment policies were all written for a world where robots were predictable and bounded. A robot that improvises introduces new categories of risk that existing rules do not cleanly address.

The 99% reliability figure will almost certainly be surpassed within a few years. The more durable question is what institutional infrastructure (legal, economic, and social) gets built alongside the technology, and whether it keeps pace with the machines that are, increasingly, learning to keep pace with us.

